Quantum computing promises breakthroughs in cryptography, drug discovery, and complex system modeling—but there’s a problem: quantum systems are incredibly fragile. If you’re here, you likely want to understand how researchers are solving the instability and noise issues that make large-scale quantum computers so difficult to build. This article explores the latest advances in quantum error correction methods, explaining how they stabilize qubits, reduce computational errors, and move us closer to fault-tolerant quantum machines.

We break down the core principles behind leading correction frameworks, examine real-world experimental progress, and highlight what these developments mean for the future of AI, robotics, and next-generation computing. Our analysis draws on peer-reviewed research, published experimental results, and insights from active quantum labs to ensure accuracy and relevance.

By the end, you’ll have a clear understanding of why error correction is the backbone of scalable quantum technology—and what innovations are pushing the field forward right now.

Quantum computers derive their power from qubits, units that can exist in a superposition of states. Superposition lets algorithms exploit interference across many computational paths at once, but it also makes qubits exquisitely sensitive to heat, radiation, and electromagnetic interference (yes, even tiny fluctuations). Critics argue classical supercomputers are more practical and less fragile. Fair. Yet no known classical algorithm factors large numbers efficiently, whereas Shor’s quantum algorithm does (Shor, 1994), and classical simulation of complex molecules scales poorly.

Modern labs combat instability with quantum error correction methods, encoding one logical qubit across many physical qubits. Codes such as the surface code and the Shor code detect bit-flip and phase-flip errors without collapsing the encoded information, which is the route from fragile hardware to fault tolerance.

Why Quantum Bits Are So Unstable: Understanding Decoherence

Decoherence is the process by which a qubit loses its quantum behavior, specifically superposition and entanglement, because of interactions with its surrounding environment. In simple terms, superposition means a qubit can exist in a combination of 0 and 1 at the same time, while entanglement links qubits so tightly that their measurement outcomes stay correlated no matter how far apart they are. However, even tiny disturbances (temperature fluctuations, mechanical vibrations, or stray electromagnetic fields) can disrupt this fragile state. According to research published in Nature Reviews Physics (2019), environmental noise is one of the primary barriers to building scalable quantum computers.

By contrast, a classical bit is stable: it’s either 0 or 1, never both. A qubit, meanwhile, is more like Schrödinger’s famous cat (yes, that paradox). The dilemma is that any unintended “measurement” by the environment collapses the superposition into a definite state, introducing computational errors.

There are two primary error types. First, the bit-flip (X-error), where a |0⟩ becomes |1⟩ and vice versa, similar to a classical glitch. Second, the phase-flip (Z-error), which is uniquely quantum: it flips the sign of the relative phase between |0⟩ and |1⟩, corrupting information without changing the probability of reading 0 or 1. Consequently, researchers rely on quantum error correction methods to detect and correct these failures.
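To make the two error types concrete, here is a minimal sketch in plain Python. It treats a single qubit as a pair of amplitudes for |0⟩ and |1⟩; the function names are illustrative choices, not part of any quantum library.

```python
import math

def apply_x(state):
    """Bit-flip (Pauli X): swap the amplitudes of |0> and |1>."""
    a, b = state
    return (b, a)

def apply_z(state):
    """Phase-flip (Pauli Z): negate the amplitude of |1>."""
    a, b = state
    return (a, -b)

def probabilities(state):
    """Probabilities of reading 0 or 1 in the computational basis."""
    a, b = state
    return (abs(a) ** 2, abs(b) ** 2)

zero = (1.0, 0.0)                               # the state |0>
plus = (1 / math.sqrt(2), 1 / math.sqrt(2))     # equal superposition |+>

flipped = apply_x(zero)     # |0> becomes |1>
dephased = apply_z(plus)    # |+> becomes |->: sign of the |1> amplitude flips

print(probabilities(plus), probabilities(dephased))
```

The two printed probability pairs are identical even though the state has changed, which is why a phase-flip cannot be spotted by simply reading out 0 or 1.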

The Core Principle: Fighting Errors with Redundancy and Entanglement

Imagine sending a message over a crackling radio. You repeat it three times so the listener can compare versions and spot the mistake. That’s classical redundancy. If two copies match and one doesn’t, you trust the majority.
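The radio trick is easy to sketch in code. The snippet below is a toy classical repetition code with majority voting; the helper names are made up for illustration.

```python
from collections import Counter

def encode(bit, copies=3):
    """Repeat the bit so a single corrupted copy can be outvoted."""
    return [bit] * copies

def majority_decode(received):
    """Return the value that appears most often among the copies."""
    return Counter(received).most_common(1)[0][0]

sent = encode(1)
received = sent.copy()
received[1] ^= 1                      # noise flips the middle copy
decoded = majority_decode(received)   # the two intact copies win the vote
print(sent, received, decoded)
```

With three copies, any single flipped copy is outvoted. Two flips would fool the decoder, which is the usual trade-off between redundancy and protection.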

Quantum systems can’t use that trick directly. The no-cloning theorem says an unknown quantum state cannot be perfectly duplicated. So instead of copying, we spread the information across several qubits.

Entanglement as the Workaround

Here’s the practical shift: In quantum error correction methods, a single logical qubit is encoded across several physical qubits using entanglement. The state isn’t copied; it’s correlated. Think of it like a three-part harmony—remove one singer and you can still reconstruct the song.

To detect trouble without collapsing the music, engineers use syndrome measurement. This checks relationships between qubits, not the qubit’s actual value.

- Prepare multiple entangled qubits.

- Measure parity between pairs.

- Map the syndrome to a specific correction.

Pro tip: simulate small codes on open-source frameworks before scaling hardware experiments.

When an error flips a qubit, the syndrome acts like a diagnostic code on your laptop. You read it, apply the mapped fix, and restore the logical state without ever peeking at it directly.
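The prepare, measure, and map steps above can be sketched with the three-qubit bit-flip code, the smallest textbook example. This is a toy state-vector simulation in plain Python, assuming at most one bit-flip error and no phase errors; the function names and the `CORRECTION` table are illustrative, not taken from any framework.

```python
N = 3  # physical qubits

def encode(alpha, beta):
    """Return the 8 amplitudes of alpha|000> + beta|111>."""
    state = [0.0] * (2 ** N)
    state[0b000] = alpha
    state[0b111] = beta
    return state

def apply_x(state, qubit):
    """Bit-flip one qubit (qubit 0 is the leftmost bit of the index)."""
    mask = 1 << (N - 1 - qubit)
    return [state[i ^ mask] for i in range(len(state))]

def parity(state, q1, q2):
    """Parity check on two qubits: 0 if they agree, 1 if they differ.

    For the states in this sketch, every basis state with nonzero amplitude
    gives the same parity, so the result is deterministic and reveals
    nothing about alpha or beta.
    """
    outcomes = set()
    for i, amp in enumerate(state):
        if abs(amp) > 1e-12:
            b1 = (i >> (N - 1 - q1)) & 1
            b2 = (i >> (N - 1 - q2)) & 1
            outcomes.add(b1 ^ b2)
    assert len(outcomes) == 1, "state is outside what this sketch models"
    return outcomes.pop()

# Syndrome (parity of qubits 0,1 ; parity of qubits 1,2) -> qubit to flip back
CORRECTION = {(0, 0): None, (1, 0): 0, (1, 1): 1, (0, 1): 2}

def correct(state):
    """Read the syndrome and apply the mapped fix."""
    syndrome = (parity(state, 0, 1), parity(state, 1, 2))
    qubit = CORRECTION[syndrome]
    fixed = state if qubit is None else apply_x(state, qubit)
    return fixed, syndrome

logical = encode(0.6, 0.8)                # an arbitrary normalized state
damaged = apply_x(logical, 1)             # noise flips the middle qubit
recovered, syndrome = correct(damaged)
print(syndrome, recovered == logical)
```

Notice that `correct` never reads the amplitudes 0.6 and 0.8. It only asks whether neighboring qubits agree, which is what lets the superposition survive the check.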

A Practical Toolkit: Exploring Quantum Error-Correcting Codes

Quantum computers are powerful but fragile. A single stray interaction with the environment can corrupt a qubit. That’s where quantum error correction methods come in.

The Foundational Example: The Shor Code

The Shor Code is often the first stop on this journey. It encodes one logical qubit (the protected, information-carrying qubit) into nine physical qubits (actual hardware qubits). Why nine? Redundancy.

It layers protection: an inner three-qubit code guards against bit-flip errors (0 ↔ 1), and an outer layer of three such blocks guards against phase-flip errors (sign changes in quantum states). Think of it as writing the same sentence three times, then wrapping each copy in a second layer of redundancy: belt and suspenders.
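The layering is easiest to see in the encoded states themselves. In the standard textbook presentation of the code, the two logical basis states are:

```latex
|0_L\rangle = \frac{1}{2\sqrt{2}} \bigl(|000\rangle + |111\rangle\bigr)^{\otimes 3},
\qquad
|1_L\rangle = \frac{1}{2\sqrt{2}} \bigl(|000\rangle - |111\rangle\bigr)^{\otimes 3}
```

Inside each block of three qubits, the repetition of 000 and 111 exposes a bit-flip. Across the three blocks, the repeated plus or minus sign exposes a phase-flip.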

A vs B comparison:

- Single qubit alone: Any small disturbance can destroy information.

- Shor-encoded qubit: Can survive any error on a single physical qubit, whether a bit-flip, a phase-flip, or both at once.

Critics argue it’s too resource-heavy (nine qubits for one feels extravagant). They’re not wrong—hardware is scarce. But historically, the Shor Code proved something revolutionary: quantum information can be stabilized.

The Leading Candidate: Surface Codes

If Shor is the proof of concept, Surface Codes are the engineering favorite.

Here, qubits are arranged on a 2D lattice (a grid). Each qubit only interacts with its nearest neighbors—this property is called locality. That’s huge. Long-range qubit connections are technically difficult (and noisy).

Side-by-side:

- Shor Code: Conceptually elegant, but some of its checks span six qubits at once.

- Surface Code: Grid-based, neighbor-only interactions, hardware-friendly.

Surface Codes also have a high error threshold, commonly cited at around 1% per operation, meaning physical qubits can be fairly noisy before error correction stops helping. That makes them practical for scaling toward real-world applications of high-speed quantum processing.
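A rough back-of-the-envelope sketch shows what the threshold buys you. The qubit count below is for the rotated surface-code layout (d × d data qubits plus d × d − 1 measurement qubits). The error-rate formula is a widely used rule of thumb, and the 1% threshold and 0.1 prefactor are illustrative assumptions rather than measured values.

```python
def rotated_surface_code_qubits(distance):
    """Physical qubits in one rotated surface-code patch of odd distance d:
    d*d data qubits plus d*d - 1 measurement qubits."""
    if distance < 3 or distance % 2 == 0:
        raise ValueError("distance should be an odd integer >= 3")
    return 2 * distance * distance - 1

def logical_error_rate(p, distance, p_threshold=0.01, prefactor=0.1):
    """Heuristic scaling: prefactor * (p / p_threshold) ** ((d + 1) / 2).

    p_threshold and prefactor are placeholder values for illustration.
    """
    return prefactor * (p / p_threshold) ** ((distance + 1) / 2)

for d in (3, 5, 7):
    qubits = rotated_surface_code_qubits(d)
    below = logical_error_rate(0.001, d)   # physical error rate under threshold
    above = logical_error_rate(0.02, d)    # physical error rate over threshold
    print(f"d={d}: {qubits} qubits, below={below:.1e}, above={above:.1e}")
```

Below the assumed threshold, each step up in distance shrinks the logical error rate. Above it, bigger codes make things worse, which is why the threshold is the number hardware teams chase.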

The Underlying Framework: Stabilizer Codes

Both Shor and Surface codes belong to a broader system called stabilizer codes—a mathematical framework that uses measurement operators (stabilizers) to detect errors without measuring the quantum data directly.
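Here is a small sketch of the stabilizer idea, using the nine-qubit Shor code as the example. Stabilizers and errors are written as Pauli strings, and a check fires when the error anticommutes with it. The generator list is the standard textbook form; the helper functions are illustrative and not drawn from any library.

```python
from itertools import combinations

# The eight stabilizer generators of the nine-qubit Shor code
# ("I" means the check does not touch that qubit).
STABILIZERS = [
    "ZZIIIIIII", "IZZIIIIII",
    "IIIZZIIII", "IIIIZZIII",
    "IIIIIIZZI", "IIIIIIIZZ",
    "XXXXXXIII", "IIIXXXXXX",
]

def anticommute(p, q):
    """Two Pauli strings anticommute when they differ on an odd number of
    positions where both are non-identity."""
    clashes = sum(1 for a, b in zip(p, q) if a != "I" and b != "I" and a != b)
    return clashes % 2 == 1

def syndrome(error):
    """One bit per stabilizer: 1 if the error flips that check's outcome."""
    return tuple(int(anticommute(error, s)) for s in STABILIZERS)

def single_qubit_error(pauli, qubit, n=9):
    """Pauli string with one non-identity letter at the given position."""
    return "I" * qubit + pauli + "I" * (n - qubit - 1)

# Generators must commute with each other so they can be measured together.
assert not any(anticommute(a, b) for a, b in combinations(STABILIZERS, 2))

# Syndrome for every possible single-qubit X, Y, or Z error.
table = {
    (pauli, q): syndrome(single_qubit_error(pauli, q))
    for pauli in "XYZ" for q in range(9)
}
print(table[("X", 4)], table[("Z", 4)])
```

Every single-qubit error trips at least one check. Phase-flips on qubits in the same block share a syndrome, which is harmless here because they act identically on the encoded state.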

Some argue the math is abstract and detached from hardware reality. Yet this abstraction is precisely the strength: it offers a systematic toolkit for designing new codes as hardware evolves (like upgrading from dial-up to fiber, but for qubits).

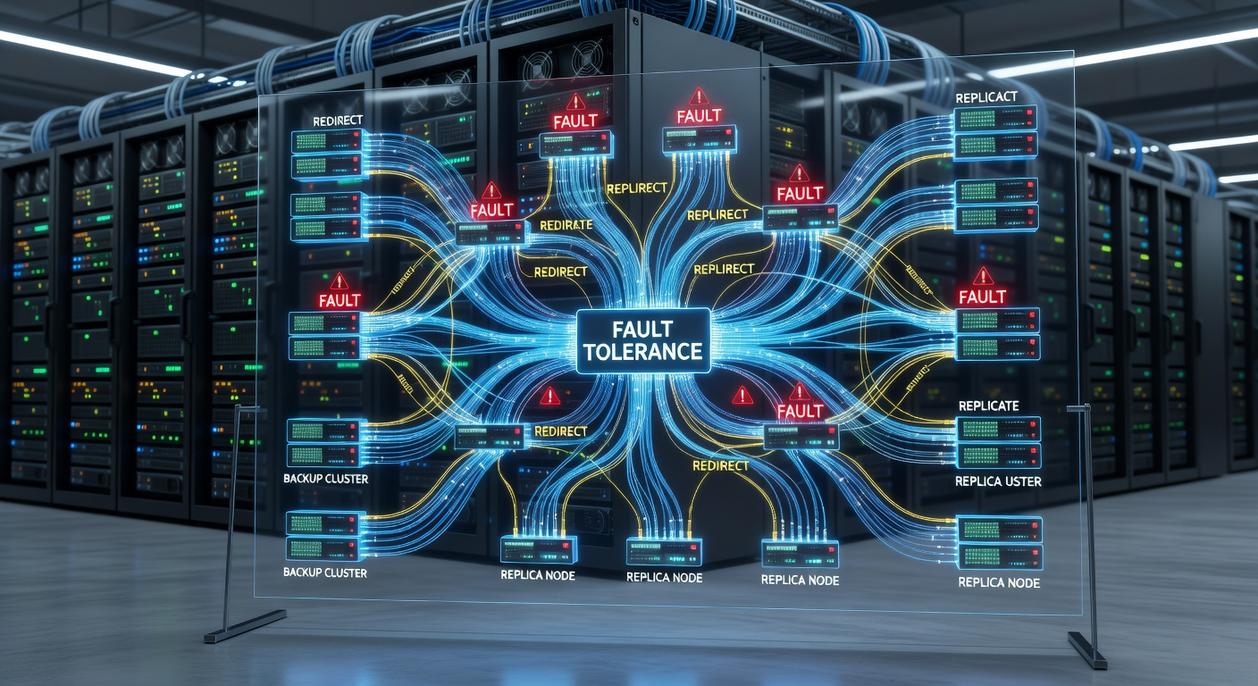

Beyond Codes: The Principles of Fault-Tolerant Design

Fault-tolerant quantum computation is more than clever math; it’s a full-system discipline. At its core, a design is fault-tolerant when errors are contained, like sparks trapped behind thick glass, never allowed to ignite a wildfire of cascading failures. However, quantum error correction methods alone aren’t enough. Gates must click with precision, measurements hum softly without shaking the state, and protocols anticipate faults before they spread. Otherwise, the cure introduces more noise than the disease. In practice, every operation is engineered to localize damage, so that one faulty component doesn’t bring down the whole algorithm (the quantum equivalent of the Death Star’s exhaust port).
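To see how a fault can spread, consider the two-qubit CNOT gate. A bit-flip sitting on the control qubit is copied onto the target, and a phase-flip on the target is copied back onto the control. The sketch below tracks this with simple (x, z) flags per qubit; the representation and function names are assumptions made for illustration, and overall signs are ignored.

```python
def propagate_through_cnot(error, control, target):
    """Push a Pauli error through a CNOT gate.

    `error` maps qubit index -> (x, z) bits, where x=1 marks a bit-flip
    component and z=1 a phase-flip component on that qubit.
    """
    out = dict(error)
    xc, zc = out.get(control, (0, 0))
    xt, zt = out.get(target, (0, 0))
    out[control] = (xc, zc ^ zt)   # phase-flips travel target -> control
    out[target] = (xt ^ xc, zt)    # bit-flips travel control -> target
    return out

def weight(error):
    """Number of qubits carrying any error component."""
    return sum(1 for x, z in error.values() if x or z)

one_fault = {0: (1, 0)}   # a single bit-flip on qubit 0
after = propagate_through_cnot(one_fault, control=0, target=1)
print(weight(one_fault), weight(after))
```

One fault going in, two coming out: this is the cascade that fault-tolerant circuit layouts are designed to limit, for example by restricting which qubits are allowed to interact.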

Engineering the Quantum Future, One Corrected Qubit at a Time

Quantum decoherence, the loss of fragile quantum states through environmental noise, remains the central barrier to scalable machines. In plain terms, qubits forget their information faster than we can use it (think of a Wi-Fi signal dropping mid-stream). Engineers counter this with redundancy, encoding one logical qubit across many physical qubits, plus entanglement, a linked state Einstein doubted. Surface codes and fault-tolerant layouts are the blueprints, and quantum error correction methods formalize how syndromes (error patterns) guide fixes. Some skeptics argue the overhead is costly. Yet once physical error rates sit below a code’s threshold, logical error rates drop exponentially as the code grows. Pro tip: minimize noise sources early.

The Future Demands Precision and Preparation

You came here to understand where emerging technology is headed — from AI and robotics to the breakthroughs shaping quantum systems — and now you have a clearer view of what’s unfolding. The pace of innovation isn’t slowing down. If anything, it’s accelerating beyond what most people are prepared for.

The real challenge isn’t access to information. It’s staying ahead of it. With rapid advances in AI autonomy, next‑gen robotics, quantum breakthroughs, and especially quantum error correction methods, the gap between those who adapt and those who fall behind is widening.
