If you’re searching for a clear starting point in the world of data, this data science basics guide is built to give you exactly that. The field can feel overwhelming—buzzwords like machine learning, AI, big data, and algorithms are everywhere—but understanding the core principles doesn’t have to be complicated. This article breaks down the fundamental concepts, tools, and workflows that power modern data science, helping you see how everything connects in real-world applications.

We’ve structured this guide to align with what beginners and career-switchers actually need: plain-language explanations, practical context, and a logical progression from foundational concepts to applied techniques. Drawing from current industry practices, emerging tech trends, and hands-on analytical frameworks, this resource is designed to be both accessible and technically accurate.

By the end, you’ll understand what data science truly involves, the essential skills required, and how to take your next confident step into the field.

Decoding the Digital Universe: Your First Step into Data Science

Data science sounds intimidating, and honestly, parts of it still are. At its core, though, it’s about turning raw data—unprocessed facts and figures—into meaningful insights. The process blends statistics, coding, and domain knowledge, but experts still debate the “right” tools.

• Data cleaning: fixing errors and gaps.

• Modeling: using algorithms—step-by-step rules—to detect patterns.

Some claim you need a PhD; others argue curiosity matters more. I suspect the truth sits between. A data science basics guide helps, but understanding grows through experimentation. Think of it as learning to read patterns.

The Three Pillars: What Makes Data Science Work

Pillar 1 – Statistics & Mathematics

First and foremost, statistics and mathematics form the bedrock of data science. They allow you to collect, analyze, and interpret numerical data, identify patterns, test hypotheses (a hypothesis is an educated guess you verify with data), and quantify uncertainty. In other words, they help you separate signal from noise. Without probability theory or regression analysis, predictions become guesswork. So, start with core concepts like distributions and confidence intervals; any solid introduction to data science should cover these thoroughly.
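To make "quantify uncertainty" concrete, here is a minimal sketch of an approximate 95% confidence interval for a mean. The sample values and the function name `mean_confidence_interval` are invented for illustration, and the sketch uses the normal approximation (z = 1.96); for a sample this small, a t-distribution would usually be the more careful choice.

```python
import math
import statistics

def mean_confidence_interval(sample, z=1.96):
    """Return (mean, lower, upper) for an approximate 95% confidence interval.

    Uses the normal approximation: mean +/- z * (s / sqrt(n)).
    """
    n = len(sample)
    if n < 2:
        raise ValueError("need at least two observations")
    mean = statistics.mean(sample)
    # Standard error: sample standard deviation divided by sqrt(n).
    std_err = statistics.stdev(sample) / math.sqrt(n)
    margin = z * std_err
    return mean, mean - margin, mean + margin

# Hypothetical sample: daily order counts over ten days.
daily_orders = [12, 15, 11, 14, 13, 16, 12, 15, 14, 13]
mean, lower, upper = mean_confidence_interval(daily_orders)
print(f"mean={mean:.2f}, 95% CI=({lower:.2f}, {upper:.2f})")
```

The interval width shrinks as the sample grows, which is one practical way to see why more data reduces uncertainty.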

Pillar 2 – Computer Science & Programming

Next comes computer science—the engine that powers everything. Using programming languages like Python or R, data scientists build algorithms (step-by-step problem-solving instructions) to store, clean, process, and model massive datasets. Think millions of rows—far beyond spreadsheet territory. For example, recommendation systems like Netflix rely on scalable code, not manual analysis. Therefore, prioritize learning data structures and efficient coding practices; clean, optimized code saves hours (and headaches).

Pillar 3 – Domain Expertise

Finally, domain expertise provides essential context. Whether in healthcare, finance, or robotics, deep field knowledge ensures you ask the right questions and interpret results correctly. A model predicting hospital readmissions means little without clinical insight. So choose a domain, study its fundamentals, and stay curious—because even the smartest algorithm needs human judgment.

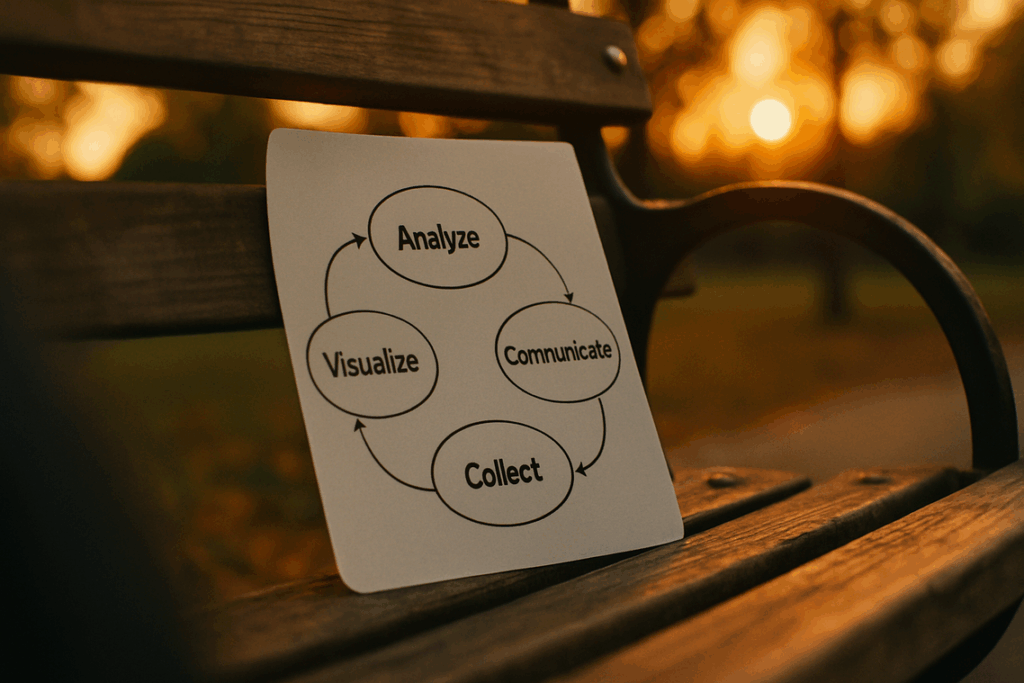

The Data Science Lifecycle: A Blueprint for Discovery

Step 1 – Problem Formulation & Data Collection

Every strong project begins with a clear, specific question. Instead of asking, “What’s in this dataset?” ask, “Why are customers churning?” Problem formulation defines scope, constraints, and success metrics. Data collection follows—pulling from databases, APIs, surveys, or sensors. If the question is flawed, everything downstream suffers (garbage in, garbage out). A practical data science basics guide always stresses clarity before code.

Step 2 – Data Cleaning & Preprocessing

This is often the longest phase, and the least glamorous. Data cleaning means correcting inconsistencies, removing duplicates, standardizing formats, and addressing missing values through deletion or imputation (statistically estimating gaps). Preprocessing may include normalization, encoding categorical variables, or feature engineering, which means creating new variables from raw inputs. For example, converting timestamps into “day of week” can reveal behavioral patterns. Well-prepared data can substantially improve model accuracy (a figure often attributed to IBM is that data scientists spend up to 80% of their time cleaning data).

| Task | Purpose | Example |
|------|---------|---------|
| Remove duplicates | Prevent bias | Same user logged twice |
| Handle missing values | Maintain consistency | Fill age with median |
| Normalize data | Scale alignment | Income scaled 0–1 |
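The cleaning tasks described above can be sketched in a few lines of standard-library Python. The records, field names, and the `clean` function are hypothetical; in practice a library such as pandas would typically handle these steps, but the logic is the same.

```python
import statistics
from datetime import datetime

DAYS = ["Monday", "Tuesday", "Wednesday", "Thursday",
        "Friday", "Saturday", "Sunday"]

# Hypothetical raw records: user 2 is logged twice and user 3 has no age.
raw_rows = [
    {"user_id": 1, "age": 34, "income": 42000, "signup": "2024-03-04"},
    {"user_id": 2, "age": 29, "income": 58000, "signup": "2024-03-05"},
    {"user_id": 2, "age": 29, "income": 58000, "signup": "2024-03-05"},
    {"user_id": 3, "age": None, "income": 90000, "signup": "2024-03-09"},
]

def clean(rows):
    # 1. Remove duplicates, keeping the first row seen for each user_id.
    seen, unique = set(), []
    for row in rows:
        if row["user_id"] not in seen:
            seen.add(row["user_id"])
            unique.append(dict(row))

    # 2. Impute missing ages with the median of the known ages.
    known_ages = [r["age"] for r in unique if r["age"] is not None]
    median_age = statistics.median(known_ages)

    # 3. Min-max scale income into the 0-1 range.
    incomes = [r["income"] for r in unique]
    lo, hi = min(incomes), max(incomes)

    for r in unique:
        if r["age"] is None:
            r["age"] = median_age
        r["income_scaled"] = (r["income"] - lo) / (hi - lo) if hi > lo else 0.0
        # 4. Feature engineering: derive day of week from the timestamp.
        signup = datetime.strptime(r["signup"], "%Y-%m-%d")
        r["signup_day"] = DAYS[signup.weekday()]
    return unique

cleaned = clean(raw_rows)
for row in cleaned:
    print(row)
```

Median imputation is only one option; whether to fill, drop, or flag missing values depends on why the data is missing.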

Step 3 – Exploratory Data Analysis (EDA)

EDA is the investigative stage. Analysts use visualizations, correlations, and summary statistics to uncover patterns and detect anomalies. A sudden spike in sales? That might align with a marketing campaign (or a data entry glitch). Tools like histograms and scatterplots help form hypotheses before modeling begins.
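As a rough illustration of this stage, the sketch below computes summary statistics, a Pearson correlation, and a simple spike check. The weekly figures are invented, and the two-standard-deviation threshold in `flag_spikes` is an assumption chosen for the example, not a universal rule.

```python
import math
import statistics

def pearson(xs, ys):
    """Pearson correlation coefficient between two equal-length sequences."""
    mean_x, mean_y = statistics.mean(xs), statistics.mean(ys)
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    return cov / math.sqrt(var_x * var_y)

def flag_spikes(values, threshold=2.0):
    """Indexes of values more than `threshold` standard deviations from the mean."""
    mean, stdev = statistics.mean(values), statistics.stdev(values)
    return [i for i, v in enumerate(values) if abs(v - mean) > threshold * stdev]

# Hypothetical weekly figures: ad spend and sales, with a jump in the sixth week.
ad_spend = [10, 12, 11, 13, 12, 30, 11, 12]
sales = [100, 110, 105, 120, 112, 260, 104, 111]

print("mean sales:", statistics.mean(sales))
print("median sales:", statistics.median(sales))
print("correlation:", round(pearson(ad_spend, sales), 3))
print("spike weeks (0-based):", flag_spikes(sales))
```

A flagged week is a prompt for investigation, not a conclusion: the same spike could be a campaign effect or a data entry error.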

Step 4 – Modeling, Evaluation & Deployment

Here, algorithms such as regression or neural networks generate predictions. Models are evaluated using metrics like accuracy or F1-score to check that they are reliable. After validation, deployment integrates the model into real systems, similar to practices outlined in the complete guide to understanding cloud computing, where infrastructure supports scalable decision-making. Pro tip: always monitor performance post-deployment so you catch model drift early.
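The two metrics named above are simple enough to compute by hand, which helps show what they measure. This is a minimal sketch with invented churn labels; the function names mirror common usage, and libraries such as scikit-learn provide tested equivalents.

```python
def accuracy(y_true, y_pred):
    """Fraction of predictions that match the true labels."""
    correct = sum(t == p for t, p in zip(y_true, y_pred))
    return correct / len(y_true)

def f1_score(y_true, y_pred, positive=1):
    """Harmonic mean of precision and recall for the positive class."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(t == positive and p == positive for t, p in pairs)
    fp = sum(t != positive and p == positive for t, p in pairs)
    fn = sum(t == positive and p != positive for t, p in pairs)
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    if precision + recall == 0:
        return 0.0
    return 2 * precision * recall / (precision + recall)

# Hypothetical churn labels: 1 = churned, 0 = stayed.
y_true = [1, 0, 0, 1, 0, 0, 1, 0, 0, 0]
y_pred = [1, 0, 0, 0, 0, 1, 1, 0, 0, 0]

print("accuracy:", accuracy(y_true, y_pred))
print("F1:", round(f1_score(y_true, y_pred), 3))
```

Here accuracy looks better than F1 because most customers stay; when the positive class is rare, F1 is usually the more informative of the two.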

Core Terminology: Understanding the Language of Data

Algorithm: An algorithm is a step-by-step set of instructions a computer follows to complete a task—like sorting emails or recommending your next binge-watch. Google’s search algorithm, for example, processes billions of queries daily using defined ranking rules (Google Search Statistics, 2024). In retail, Amazon’s recommendation algorithm reportedly drives about 35% of total sales (McKinsey). In simple terms, it’s a digital recipe: clear inputs, defined steps, measurable outputs. (Yes, even your GPS rerouting you around traffic is running one.)

Machine Learning (ML): A subset of artificial intelligence where algorithms learn patterns from data instead of being explicitly programmed. According to IBM, organizations using ML report up to 20% improvements in operational efficiency. ML systems train on historical datasets, adjusting internal parameters to reduce prediction error.

Key types include:

- Supervised Learning: Trained on labeled data (e.g., spam vs. not spam).

- Unsupervised Learning: Detects hidden patterns without labels (e.g., customer segmentation).

- Reinforcement Learning: Learns through trial and error using rewards (think AlphaGo defeating world champions, per DeepMind research).

Together, these learning styles power applications such as fraud detection, medical imaging, and self-driving systems.
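The idea of "adjusting internal parameters to reduce prediction error" can be shown with a small supervised learning sketch: fitting a straight line by gradient descent. The data, the function name `fit_line`, and the learning rate and epoch count are all assumptions picked so this toy example converges; real projects would normally rely on a library.

```python
def fit_line(xs, ys, learning_rate=0.01, epochs=5000):
    """Fit y ~ w * x + b by gradient descent on mean squared error."""
    w, b = 0.0, 0.0
    n = len(xs)
    for _ in range(epochs):
        # Prediction error for every labeled example.
        errors = [(w * x + b) - y for x, y in zip(xs, ys)]
        # Gradients of mean squared error with respect to w and b.
        grad_w = (2 / n) * sum(e * x for e, x in zip(errors, xs))
        grad_b = (2 / n) * sum(errors)
        # Step each parameter in the direction that lowers the error.
        w -= learning_rate * grad_w
        b -= learning_rate * grad_b
    return w, b

# Hypothetical labeled data generated from y = 5x + 50
# (hours studied mapped to exam score).
hours = [1, 2, 3, 4, 5]
scores = [55, 60, 65, 70, 75]

w, b = fit_line(hours, scores)
print(f"learned: score ~ {w:.2f} * hours + {b:.2f}")
```

The labels (`scores`) are what make this supervised: the model is corrected against known answers on every pass.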

Big Data: Refers to datasets defined by the three Vs: Volume (massive scale), Velocity (real-time generation), and Variety (multiple formats). By 2025, global data creation is projected to exceed 180 zettabytes (IDC). At that scale, traditional single-server databases struggle to keep up.

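Data Visualization: The practice of turning raw numbers into charts, graphs, and maps so patterns become visible. A widely repeated claim holds that the human brain processes visuals 60,000 times faster than text, but that figure lacks a traceable primary source; the safer point is that people spot trends and outliers in a chart far more quickly than in a table of numbers. A clear dashboard can reveal trends spreadsheets hide (and save hours of squinting at rows of numbers).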

Essential Tools in the Data Scientist’s Toolkit

Programming languages like Python and R are the well-worn instruments of the field, and Python libraries such as pandas and scikit-learn slice through raw, messy datasets. Meanwhile, SQL, or Structured Query Language, acts as the steady hum beneath the surface, pulling precise records from relational databases. Cloud platforms such as AWS, Google Cloud, and Azure then supply scalable compute and storage for big data and complex machine learning models. For beginners, the goal is to turn these tools into practical, repeatable workflows, where each command sharpens insight.
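To show how SQL and Python fit together, here is a small sketch using Python's built-in `sqlite3` module with an in-memory database. The `orders` table, its columns, and the sample rows are hypothetical.

```python
import sqlite3

# In-memory database with a hypothetical `orders` table.
conn = sqlite3.connect(":memory:")
conn.execute(
    "CREATE TABLE orders (id INTEGER PRIMARY KEY, customer TEXT, amount REAL)"
)
conn.executemany(
    "INSERT INTO orders (customer, amount) VALUES (?, ?)",
    [("ana", 40.0), ("ben", 15.5), ("ana", 22.0), ("cy", 9.0)],
)

# Total spend per customer, highest first, keeping totals above a threshold.
query = """
    SELECT customer, SUM(amount) AS total
    FROM orders
    GROUP BY customer
    HAVING SUM(amount) > ?
    ORDER BY total DESC
"""
rows = conn.execute(query, (10,)).fetchall()
conn.close()

for customer, total in rows:
    print(customer, total)
```

The `?` placeholders pass values separately from the query text, which is the usual way to avoid SQL injection when the threshold comes from user input.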

Your Next Frontier: Applying These Core Concepts

You now have the foundational map of data science, from its pillars to the lifecycle that turns questions into answers. However, mastery is less about memorizing tools and more about practicing inquiry, exploration, and modeling. A model, meaning a simplified representation of reality, lets you test ideas before you take on real risk. For example, analyze your expenses to predict savings trends. Start with a small project and iterate. Think of it like training Jarvis before building Iron Man’s suit. Pro tip: document your assumptions. Then scale up toward larger domains such as robotics or quantum systems, and apply what you learn to real problems.
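The expenses example makes a good first project. The sketch below fits a straight-line trend to monthly savings using the closed-form least-squares formula; the income and expense figures are invented, and `linear_trend` is a hypothetical helper name.

```python
def linear_trend(values):
    """Least-squares slope and intercept for values observed at t = 0, 1, 2, ..."""
    n = len(values)
    ts = range(n)
    mean_t = sum(ts) / n
    mean_v = sum(values) / n
    covariance = sum((t - mean_t) * (v - mean_v) for t, v in zip(ts, values))
    variance_t = sum((t - mean_t) ** 2 for t in ts)
    slope = covariance / variance_t
    intercept = mean_v - slope * mean_t
    return slope, intercept

# Hypothetical figures: fixed monthly income and six months of expenses.
income = 3000
expenses = [2600, 2550, 2500, 2520, 2450, 2400]
savings = [income - e for e in expenses]

slope, intercept = linear_trend(savings)
# Extrapolate one month past the observed data.
next_month = slope * len(savings) + intercept
print(f"savings grow by about {slope:.0f} per month")
print(f"projected savings next month: {next_month:.0f}")
```

A straight line is a deliberately simple model; writing down that assumption is exactly the kind of documentation the pro tip above recommends.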

Master the Next Wave of Intelligent Innovation

You came here to cut through the noise and truly understand how modern data workflows, AI systems, and automation frameworks connect at a foundational level. Now you have that clarity.

The reality is simple: without strong fundamentals, emerging technologies like AI, robotics, and quantum computing feel overwhelming. That uncertainty slows innovation, creates costly mistakes, and keeps great ideas from becoming real-world solutions.

That’s exactly why building on a solid data science basics guide matters. It transforms confusion into capability and turns raw information into intelligent action.

Here’s your next move: revisit the core principles, apply them to a small real-world project, and start experimenting with automation or AI-driven analysis today. Don’t let rapid tech evolution leave you behind.
